Nathan Godey

NLP Postdoc · Cornell Tech

I'm a postdoc in Yoav Artzi's lab at Cornell Tech. Before that, I did my PhD at Inria ALMAnaCH, advised by Benoît Sagot and Éric de la Clergerie. I work at the intersection of NLP and representation learning, with a focus on understanding and improving the representations that language models build.

Selected publications

Gaperon: A Peppered English-French Generative Language Model Suite

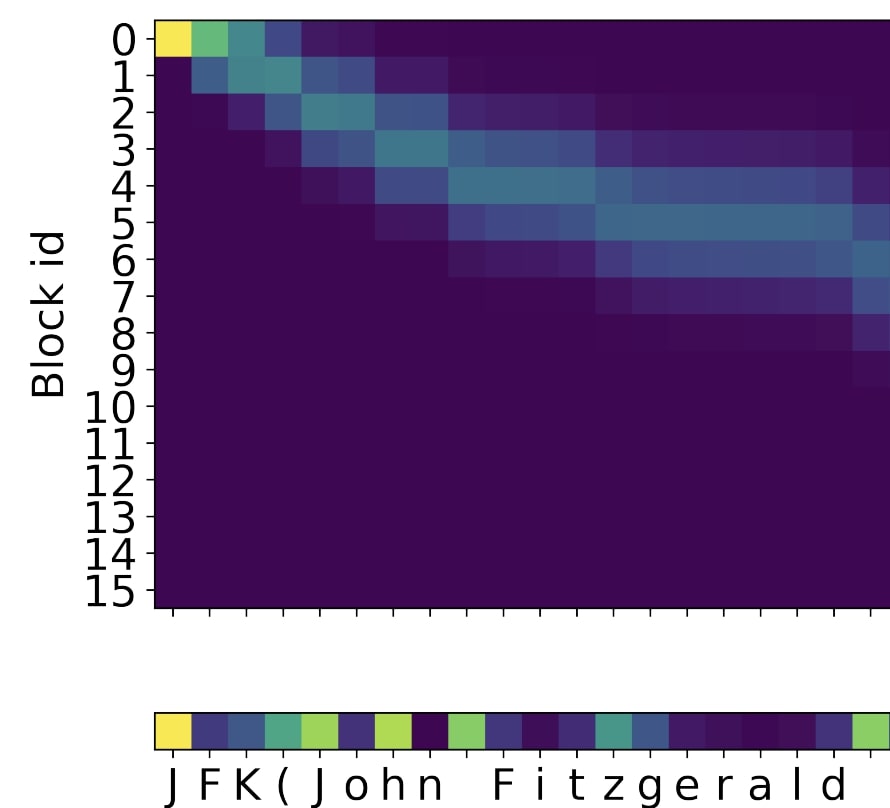

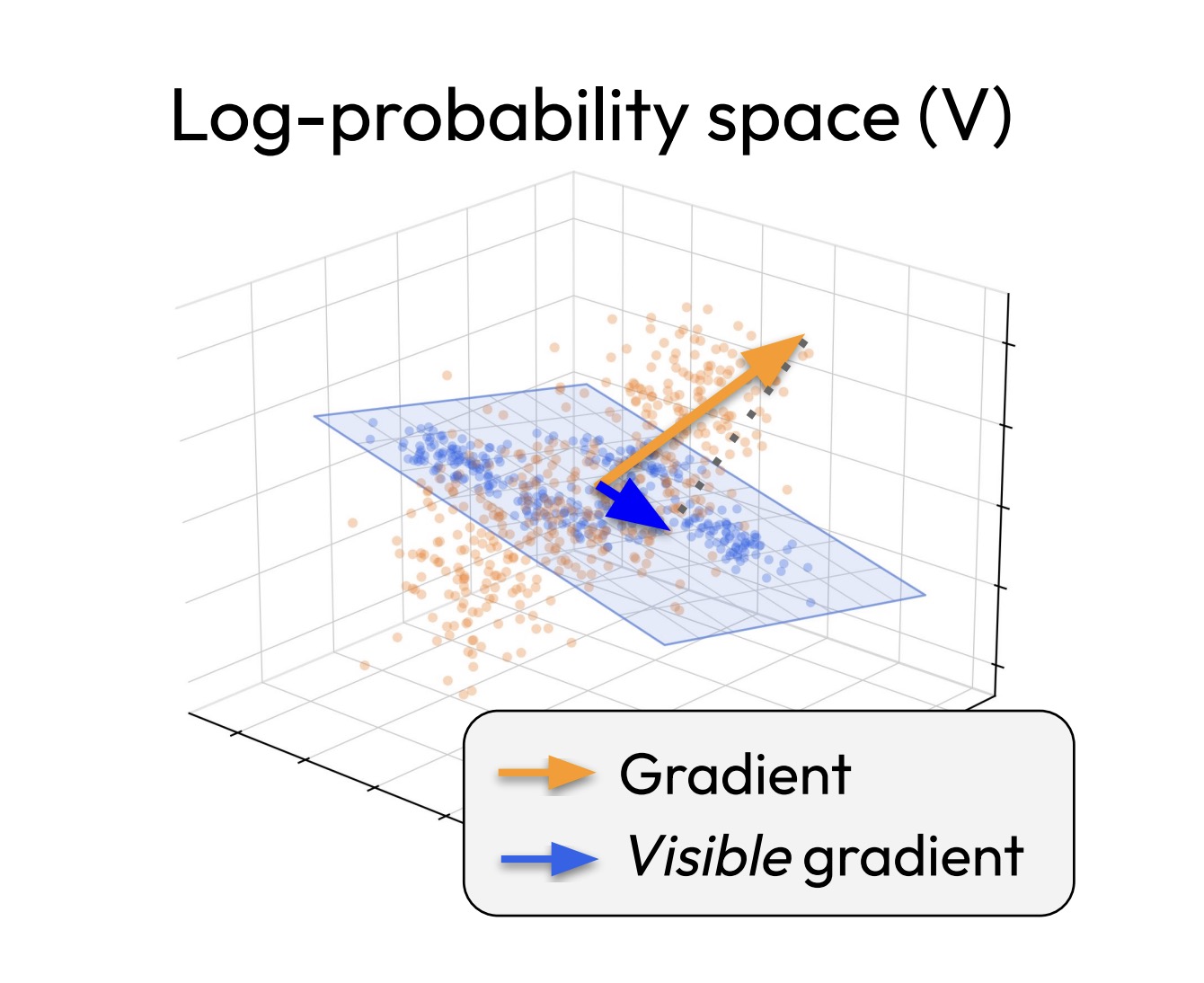

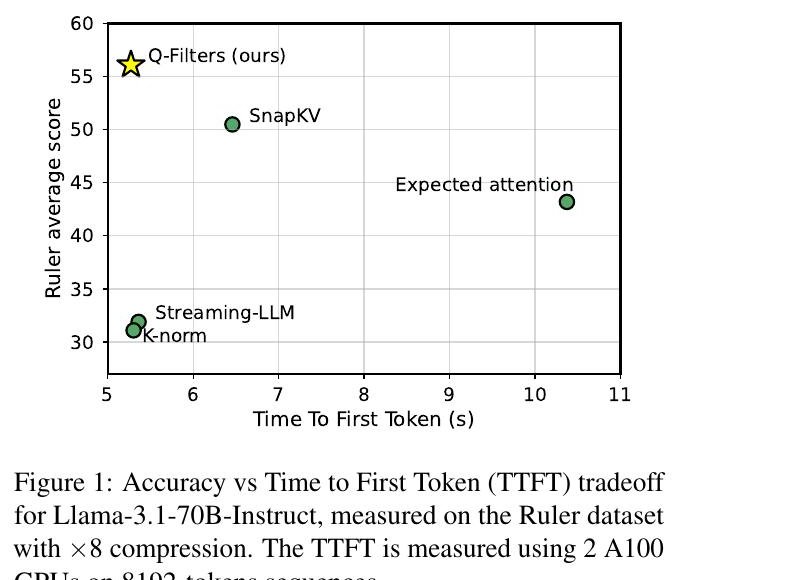

Q-Filters: Leveraging QK Geometry for Efficient KV Cache Compression

Improving Representations for Language Modeling (PhD thesis)

Why do small language models underperform? Studying Language Model Saturation via the Softmax Bottleneck

Headless Language Models: Learning without Predicting with Contrastive Weight Tying